IBM has managed to dramatically reduce the number of quantum bits, or qubits, required to prevent errors in a quantum computer. Its latest approach to quantum error correction should bring down the number of qubits needed to build a useful quantum machine.

|

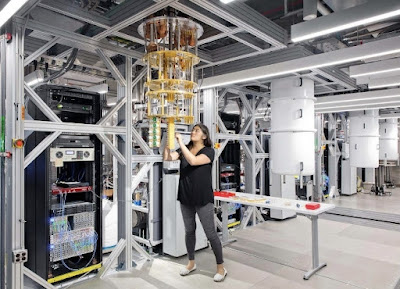

| One problem with quantum computers is that they have a high error rate Connie Zhou/IBM |

The biggest problem with today’s quantum computers is that they are noisy, meaning they have error rates around 1 in 1000, whereas classical error rates tend to be around 1 in 1 billion billion. This means that if you want to lower your error rate on a quantum computer, you need many additional qubits – potentially millions or even more – to check for errors and make sure that your final answer is accurate.

“The overall principle of error correction is redundancy,” says Ted Yoder at IBM in New York. “If I really want to send you a message and make sure you get it, one thing I can do is send it multiple times, and even if there’s a bunch of noise around hopefully you get every part of it.”
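Yoder's "send it multiple times" analogy can be made concrete with a small calculation. The sketch below is a classical illustration only, not IBM's protocol: it assumes each copy of a bit is corrupted independently with the same probability, and that the receiver takes a majority vote. The function name `majority_vote_failure` is made up for this example. Real quantum codes cannot simply copy qubits, so they build in redundancy through entanglement and check measurements, but the pay-off from redundancy follows the same intuition.

```python
from math import comb


def majority_vote_failure(p: float, copies: int) -> float:
    """Probability that a majority vote over `copies` independent
    transmissions returns the wrong bit, when each transmission is
    flipped with probability `p`. Illustrative classical model only."""
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    # The vote fails when more than half of the copies are corrupted.
    min_bad = copies // 2 + 1
    return sum(
        comb(copies, k) * p**k * (1 - p) ** (copies - k)
        for k in range(min_bad, copies + 1)
    )


# Error rate of roughly 1 in 1000, as quoted for today's quantum hardware.
for n in (1, 3, 5, 7):
    print(n, majority_vote_failure(0.001, n))
```

Under these assumptions, sending three copies instead of one cuts the failure probability from 1 in 1000 to about 3 in a million, and each further pair of copies lowers it again. The cost is the extra copies, which is the overhead the article goes on to describe.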

But with today’s technology, it isn’t possible to build and connect that many qubits. So Yoder and his colleagues have come up with a procedure that can correct errors while reducing overhead – the number of qubits required to keep errors low.

Their protocol is based on a set-up where each qubit in the computer is connected to six others via quantum entanglement so that each of the seven connected qubits monitors the others. The main front-runner in quantum error correction, called the surface code, connects each qubit to four others, making it easy to arrange them in a simple grid on the surface of a chip, but this new set-up requires two parallel grids to achieve the required level of connectivity.

This is slightly more complex and would require new types of chips and long-range connectors to link the qubits on those chips, but it could reduce the number of qubits required to store information in a quantum computer by a factor of ten or more. The researchers calculated that it could achieve, with 288 qubits, a level of error correction that would require 4000 qubits using the surface code.
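As a quick check on the scale of the saving, the two qubit counts quoted above can be compared directly. This is simple arithmetic on the article's figures, not a calculation taken from the researchers' work; the variable names are chosen for illustration.

```python
# Qubit counts as quoted in the article for the same level of error correction.
surface_code_qubits = 4000
new_protocol_qubits = 288

# Ratio of the two counts: how many times fewer qubits the new protocol needs.
reduction_factor = surface_code_qubits / new_protocol_qubits
print(f"Reduction factor: {reduction_factor:.1f}x")  # prints "Reduction factor: 13.9x"
```

On these numbers, the saving is closer to fourteen-fold than ten-fold.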

The technologies required to make this idea a reality are plausible and could be built within the next couple of years, says Jérémie Guillaud at French quantum computing start-up Alice & Bob.

But that doesn’t quite mean that we’re on the verge of completely fault-tolerant, error-free quantum computing. “Fault tolerance is so hard to achieve because implementing error correction alone is not enough,” says Guillaud. “The quantum systems actually need to be very good to start with for quantum error correction to work.”

As long as the original qubits have error rates below 0.8 per cent, this new system is expected to improve error correction dramatically. “Otherwise you’re building a house out of crumbling bricks,” says Yoder. An error rate below 0.8 per cent is not unreasonable in the near term.

“This is great news for the community seeking to store quantum information, but there is a question mark whether computations can be performed,” says Fernando Gonzalez-Zalba at the University of Cambridge. “The numbers [also] still feel extremely high for short-term applications.”

Yoder agrees that this new protocol isn’t quite ready for prime time. It still requires hundreds of qubits, whereas most current quantum computers have fewer than a hundred, and the researchers are still working on how to extend it from simply storing quantum information to performing actual computations. “We would certainly like to do computation and to do it with very low overhead, but this idea of constructing a more efficient memory is a step towards that,” says Yoder. “There are still hurdles to overcome, but we are excited about the direction that this opens up.”
